Here's a Tip for Picking Out Misleading Science News (Featuring AC/DC and the Beatles)

Another sniff test for detecting bad science and bad science reporting

Welcome to Range Widely, where I help you think a new thought for a few minutes each week. You can subscribe below.

Bad science, or bad reporting on science, is a recurring topic here at Range Widely.

Today I want to highlight an example of really bad reporting on top of what looks like quite bad science.

“Surgeons who listen to AC/DC are faster and more accurate,” according to a bunch of headlines. But let’s pick on the New York Post. Here’s how the Post put the findings from a surgical journal:

“In trials, those [surgeons] listening to Highway To Hell and T.N.T. saw the time needed to make a precision cut drop from 236 seconds to 139.

And they did around five percent better on tests of accuracy.

Doctors were almost 50 percent quicker stitching up wounds when The Beatles’ hits Hey Jude and Let It Be were played in the background — but the positive effect was lost if those tracks were played loud.

Experts claim popular rock tunes boost performance by easing stress, relaxing muscles, combating anxiety, and even lowering blood pressure.”

Big if true.

Now, before we get to what the actual study said, I want to hammer home a point I’ve made previously: there are often clues in news articles that a study is not reliable. A typical clue is when a finding proclaimed in the headline is explained later in the story as something that only works in newly discovered, very specific conditions. Back to the Post:

“For hard rock music, the positive effect was especially noticeable when the music was played in high volume.”

That’s interesting. Apparently volume mattered differently for the Beatles than it did for AC/DC. So I read coverage in another publication, which added this tidbit:

“The Beatles’ Hey Jude and Let It Be also sped up the time taken to stitch up wounds by a whopping 50 per cent, however, only when the music was played at a low volume.”

Curiouser and curiouser, as Alice would say. So the surgery-enhancing effect of soft rock only works at low volume, while the effect is most pronounced for hard rock at high volume. Well of course! That’s how the music was meant to be played, and anything else would upset a surgeon’s worldview in the middle of surgery, right? Unlikely.

In January, I wrote a post on the famous “everything in your fridge causes and prevents cancer” study. I won’t go into it in detail here, but the upshot was this: when you see this sort of ultra-nuanced effect in a news article, treat it as a possible sign that the researchers inappropriately (but often not maliciously) sliced and diced their data in order to create some tantalizing positive finding. Given enough data and enough slicing and dicing, they will inevitably find one among the many possible false positives.

Let’s say a study starts out asking whether music makes surgeons perform better, but the results show nothing. Dead boring. So then the researchers separate the data into surgeons who heard soft rock versus hard rock. Ok, now the hard rock shows an effect. But, hmmmm, still no effect for soft rock. But if you look only at the data when the music is low-volume, there’s an effect. Interesting! Headlines!

But the reality is often that the researchers just sifted the data so much that they were bound to find false positives. Again, I detail this process at greater length in that January post. Whenever you get a whiff of slicing and dicing, raise your eyebrow.
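If you want to see how fast false positives pile up, here is a minimal Python sketch. Everything in it is an assumption for illustration, not the study’s data or analysis: music has zero true effect, each slice (say, one genre at one volume on one task) compares 30 music trials against 30 no-music trials, the slices are treated as independent, and the function names are made up. It counts how often a study with nothing going on still turns up at least one “significant” slice at the usual 5 percent threshold.

```python
import random
import statistics


def slice_is_significant(rng, n_per_group=30, z_crit=1.96):
    """Compare music vs. no-music scores in one slice where music truly does nothing."""
    # Both groups come from the same distribution, so the true effect is zero.
    music = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
    silence = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
    diff = statistics.fmean(music) - statistics.fmean(silence)
    # The noise SD is known to be 1 here, so a simple z-test is enough for a sketch.
    standard_error = (2.0 / n_per_group) ** 0.5
    return abs(diff / standard_error) > z_crit


def chance_of_a_headline(n_slices, n_studies=2000, seed=0):
    """Fraction of simulated null studies with at least one 'significant' slice."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_studies):
        if any(slice_is_significant(rng) for _ in range(n_slices)):
            hits += 1
    return hits / n_studies


if __name__ == "__main__":
    # Hypothetical slice counts: 1 comparison, 6 slices, 24 slices.
    for n_slices in (1, 6, 24):
        print(n_slices, "slices:", chance_of_a_headline(n_slices))
```

With a single comparison, a false alarm should come up about 5 percent of the time. For k independent slices, the chance of at least one is 1 - 0.95^k, which works out to roughly 26 percent for six slices and about 71 percent for 24. Real slices of the same data are not fully independent, so treat those numbers as a rough guide rather than a precise estimate.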

But there are other problems in this particular case…

There Were Neither Surgeons Nor Wounds

The New York Post (and others) wrote that the surgeons got better at “stitching up wounds.”

According to the actual study, there were no surgeons, and there was no wound stitching. Rather, 30 medical students in Dresden “who showed a particular interest in surgery” were tested on four different tasks. Like “peg transfer”:

“Transfer six rubber triangles from the left to right side”

And “precision cutting”:

“Cut out a circle from a square piece of gauze accurately respecting the pre-marked line.”

I’m sure those are fine tasks, but they’re not “stitching up wounds.” But aside from the possibility of data sifting, and the fact that the not-surgeons were doing not-surgery, I want to cast a bigger aspersion on this study….

The Practice Effect

Some famous psychology findings have esoteric names. Not the “practice effect.” It means that you will often improve at a new task with practice. That might seem trivial, but it’s important for psychologists to recognize.

Let’s say I’m doing a study about whether eating bananas improves the skills of student drivers. I have students drive around, and I rate their driving. Then I give all of them bananas, and have them do the driving test again. They do better this time. Before jumping to the conclusion that bananas lead to better driving, I’m definitely going to want to know how much people normally improve upon taking the driving test repeatedly.

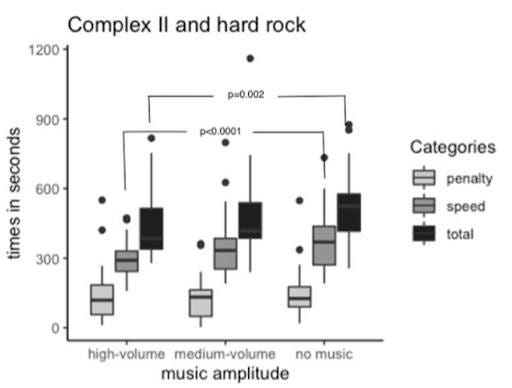

So here’s a figure from the surgery study:

The med students were slowest at cutting tasks (that’s “complex II”) with no music, medium-fast with medium-volume hard rock, and fastest with high-volume hard rock. But, according to the study:

“The sequences of different music genres and amplitudes were not randomized.”

That’s a big deal. It means that every med student did the music trials in the same order. With the practice effect in mind, would you like to guess what that order was? Take a minute…

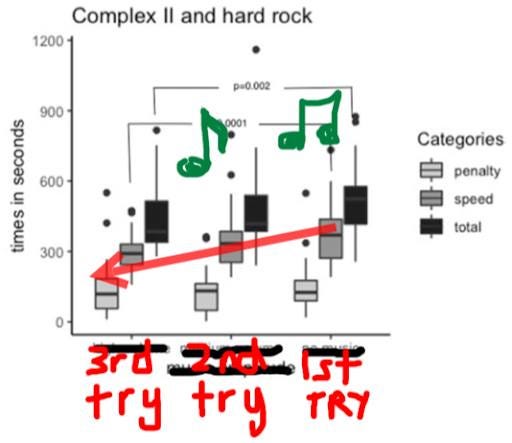

…If you guessed that first they had no music, then they had medium-volume music, and finally high-volume music, you win. (I think. The paper is a bit confusing on this point.) I have helpfully annotated the figure for what probably happened:

As Allen Iverson and Ted Lasso would say: We’re talking about practice.
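The same logic can be sketched in a few lines of Python. This is a toy simulation, not a reanalysis of the paper: the 240-second starting time, the 20 percent speedup per repeat, the noise levels, and the name `run_study` are all assumptions made for illustration, and music is given no effect at all. The only thing that changes between the two runs is whether every student gets the conditions in the same order.

```python
import random
import statistics

CONDITIONS = ["no music", "medium volume", "high volume"]


def run_study(randomize_order, n_students=30, seed=1):
    """Return mean completion time (seconds) per condition; music has NO true effect."""
    rng = random.Random(seed)
    times = {condition: [] for condition in CONDITIONS}
    for _ in range(n_students):
        order = CONDITIONS[:]
        if randomize_order:
            rng.shuffle(order)  # each student gets their own random sequence
        baseline = rng.gauss(240.0, 20.0)  # hypothetical first-attempt time
        for attempt, condition in enumerate(order):
            # Assumed practice effect: each repeat is 20% faster than the last.
            practiced = baseline * (0.8 ** attempt)
            times[condition].append(practiced + rng.gauss(0.0, 10.0))
    return {condition: statistics.fmean(v) for condition, v in times.items()}


if __name__ == "__main__":
    print("fixed order:     ", run_study(randomize_order=False))
    print("randomized order:", run_study(randomize_order=True))
```

In the fixed-order run, “high volume” looks fastest simply because it always came last. When the order is shuffled for each student, the three averages land close together, which is exactly why you randomize the sequence.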

Thank you for reading. If you think this post might be good for science-news literacy, please share it. (Tag me on Twitter or Instagram so I can thank you.)

And thanks to Jeff Novich for sending me the New York Post article, and to Drew Bailey for always being up for a chat about research methods.

Until next week…

David

P.S. Having read the paper again, it is — to use scientific terminology — a hot mess. Let me know in the comments if you’re interested in another (shorter) post about how the main finding touted in these music/surgery news articles isn’t real, according to the scientific paper itself. In that hypothetical post, I’d also explain how you can catch the mistake in the paper, even though the authors missed it. And if you made it this far, congrats on your attention span!